It measures how often the four biggest AI engines (ChatGPT, Claude, Gemini, and Perplexity) pick your brand over your competitors when a buyer asks a category-defining question.

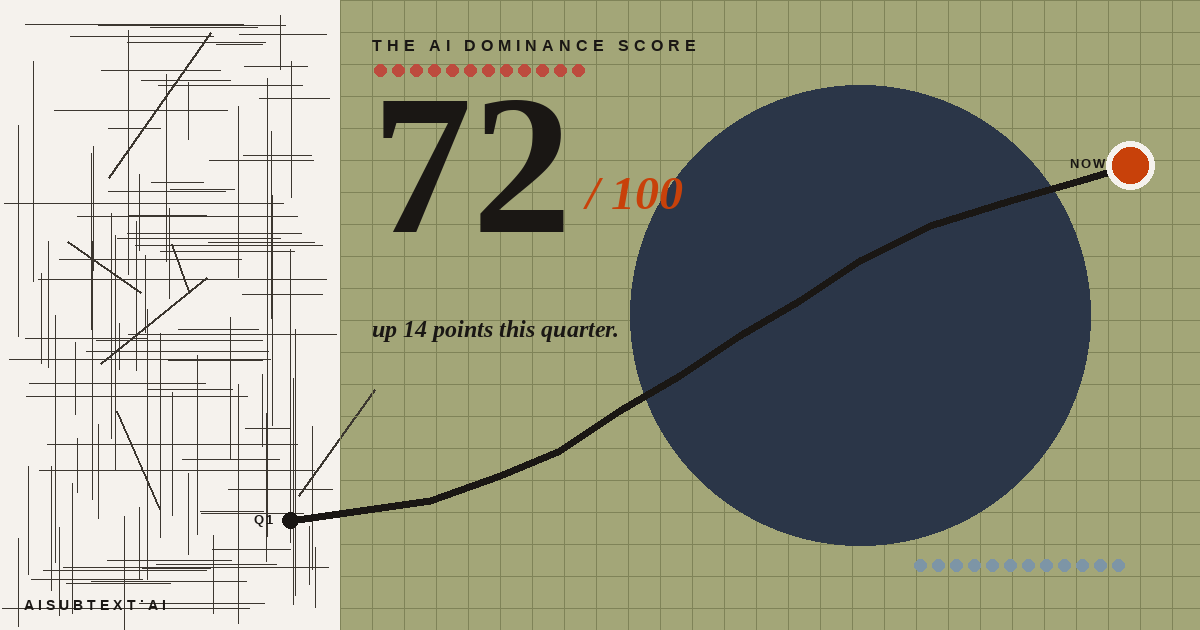

We call it the AI Competitive Score.

It runs 0 to 100. A score of 100 means AI engines recommend you nearly every time someone asks for options in your category. A score of 0 means they don't know you exist. Most B2B brands we've scanned sit somewhere in the middle, usually lower than they'd guess.

This matters because buyer behavior is shifting faster than most marketing dashboards can track. Last year, the question "where did this inbound lead come from?" still had a clean answer for most companies: a referral, an event, an ad, an organic search. This year, a growing slice of qualified inbound buyers arrive with a shortlist they built by asking an AI, not Google. If you're not on those shortlists, those buyers never become leads.

You can check your own AI Competitive Score in 60 seconds. If you want the conceptual ground first, keep reading.

What the score actually represents

The AI Competitive Score isn't a proxy for marketing quality or brand awareness as traditionally understood. It's more specific.

We run a standardized set of buyer-intent prompts across all four major AI engines. Questions real buyers actually ask, structured the way they actually ask them. "Best [category tool] for a [segment]." "Alternatives to [competitor]." "Who's leading in [emerging space]." We then measure how often your brand shows up in the response, where it ranks, and how the engines characterize you when they do mention you.

The result is a single number that tracks over time.
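To make the measurement concrete, here is a minimal sketch of how mention frequency and rank position could be folded into one 0-to-100 number. The real prompt set, weighting, and formula behind the AI Competitive Score are not described in this article, so the `Response` type, the `toy_score` function, and the 1/rank credit rule are all illustrative assumptions.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Response:
    """One engine's answer to one buyer-intent prompt (hypothetical shape)."""
    engine: str
    prompt: str
    brands_ranked: List[str]  # brands in the order the engine listed them


def mention_rank(response: Response, brand: str) -> Optional[int]:
    """Return the 1-based position of the brand, or None if it is absent."""
    names = [b.lower() for b in response.brands_ranked]
    try:
        return names.index(brand.lower()) + 1
    except ValueError:
        return None


def toy_score(responses: List[Response], brand: str) -> float:
    """Assumed scoring rule: 1/rank credit per response, scaled to 0-100."""
    if not responses:
        return 0.0
    credit = 0.0
    for response in responses:
        rank = mention_rank(response, brand)
        if rank is not None:
            credit += 1.0 / rank  # first place earns full credit
    return round(100 * credit / len(responses), 1)


# Made-up responses, for illustration only.
sample = [
    Response("ChatGPT", "Best [category tool] for a [segment]", ["Acme", "RivalCo"]),
    Response("Claude", "Best [category tool] for a [segment]", ["RivalCo", "Acme"]),
    Response("Gemini", "Alternatives to [competitor]", ["RivalCo"]),
    Response("Perplexity", "Alternatives to [competitor]", ["OtherCo", "RivalCo"]),
]
```

With this sample data, `toy_score(sample, "Acme")` gives 37.5: full credit for one first-place mention, half credit for one second-place mention, and nothing for the two responses that leave the brand out.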

What it captures that other metrics miss:

- Per-engine variation. ChatGPT might love your brand while Claude ignores you. Gemini might recommend you for enterprise but never for SMB. A single blended number would hide that. The AI Competitive Score breaks it down by engine.

- Trend direction. One-time audits are point estimates. The AI Competitive Score updates over time, so you see whether you're gaining or losing recommendation share. Rising means more wins, more pipeline. Falling means the opposite, often long before your CRM feels it.

- Competitive context. Your score is only meaningful against the category. If your competitors move up, you're losing ground even when your own number holds. The score is tracked comparatively.
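The three views above can be sketched with a few lines of arithmetic. The brand names, engine scores, and helper functions below are hypothetical and only show how a per-engine spread, a trend, and a gap against the strongest rival might be read from the same data.

```python
from typing import Dict, List

# brand -> engine -> monthly scores, oldest first (made-up numbers)
Scores = Dict[str, Dict[str, List[float]]]

history: Scores = {
    "YourBrand": {
        "ChatGPT": [70, 68, 66],
        "Claude": [20, 20, 20],
        "Gemini": [50, 50, 50],
        "Perplexity": [40, 40, 40],
    },
    "RivalCo": {
        "ChatGPT": [40, 44, 48],
        "Claude": [40, 44, 48],
        "Gemini": [40, 44, 48],
        "Perplexity": [40, 44, 48],
    },
}


def blended(scores: Scores, brand: str, month: int) -> float:
    """Average across engines for one month (index -1 is the latest)."""
    per_engine = scores[brand]
    return sum(series[month] for series in per_engine.values()) / len(per_engine)


def engine_spread(scores: Scores, brand: str, month: int) -> float:
    """Gap between the brand's best and worst engine in one month."""
    values = [series[month] for series in scores[brand].values()]
    return max(values) - min(values)


def trend(scores: Scores, brand: str) -> float:
    """Change in the blended score from the first month to the latest."""
    return blended(scores, brand, -1) - blended(scores, brand, 0)


def gap_to_best_rival(scores: Scores, brand: str, month: int) -> float:
    """Blended score minus the strongest competitor's blended score."""
    rivals = [blended(scores, b, month) for b in scores if b != brand]
    return blended(scores, brand, month) - max(rivals)
```

In this made-up data, YourBrand's blended score barely moves (45 to 44), yet its position flips from 5 points ahead of RivalCo to 4 points behind, and the blended number hides a 46-point spread between its best and worst engine.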

Why falling scores are more dangerous than you'd expect

The pipeline impact of a declining AI Competitive Score is invisible at first.

Here's how it plays out in practice. A CMO notices sales velocity slowing slightly, but attribution looks normal. The team assumes it's a seasonal blip or a macro issue. Three quarters later, pipeline is clearly down year-over-year, deals are stalling earlier in the funnel, and the feedback from AEs is "prospects are coming in already comparing us to vendors we've never competed with before."

That last line is the giveaway. A prospect arrived having done their research elsewhere, in an AI engine that built them a shortlist, and your brand wasn't on it. Your AE is now defending against competitors you didn't know were in the same consideration set.

By the time this pattern is obvious in the CRM, you've been losing recommendation share for six to nine months. The score would have told you four months earlier.

The counterintuitive finding: budget doesn't buy the score

We've scanned enough B2B categories to see a clear pattern, and it's the one most CMOs find surprising.

The brand with the highest marketing budget in a category is rarely the one with the highest AI Competitive Score.

Sometimes it's in the top three. Often it's not. We've scanned categories where a well-funded market leader sat in the 30s while a smaller, more focused competitor sat in the 70s.

The explanation is structural, not about spend.

AI engines don't care how much you're paying for brand campaigns. They care about whether your brand is represented in the places they trust: review sites with proper schema, category-specific industry publications, podcasts with indexed transcripts, bylines with author metadata. They care about whether your product pages describe what you do in buyer-intent language, or in marketing prose that obscures it. They care about whether your brand name is consistent across every surface, or fragmented into four different forms that collapse into three different entities in their graph.

The top scores go to brands with the cleanest structured signals: category-specific schema, consistent naming, dense citations in trusted places.

This is good news and bad news. Good news: smaller brands with better discipline can outperform category leaders. Bad news: if you're the category leader with the biggest budget, that budget doesn't protect you.

What moves the score

Five things, in approximate order of impact:

- Category-specific schema markup. Describing your product as "software platform" gives AI engines nothing to work with. Describing it as "revenue intelligence software for B2B sales teams over 100 reps" gives them signal. Specificity wins. This is the fastest lever to pull.

- Citations in trusted category sources. G2, Capterra, TrustRadius, and other review platforms matter. So do industry-specific publications with proper schema. The engines don't just read text. They read a graph of who says what about whom, and where.

- Brand name consistency. "Acme" and "Acme, Inc." and "Acme Software" are three different entities to an LLM unless you've explicitly told it otherwise. Every surface (your site, your press releases, your podcast appearances, your executive bios) should use the same form.

- Executive and founder authority signals. Your key people are part of your brand graph. Podcast appearances with transcripts, bylines with proper author metadata, conference talks indexed correctly, LinkedIn profiles with consistent brand references. Engines can read this graph.

- Use-case clarity on your product pages. Who is this for? What size team? What problem does it solve? The engines need explicit answers, not marketing prose. A product page that reads like a brochure is invisible to them.
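As an illustration of the first lever, here is a sketch that builds schema.org `SoftwareApplication` markup as JSON-LD. The type and the `applicationCategory`, `description`, and `audience` properties come from the schema.org vocabulary; the product name, the wording, and the helper functions are placeholders, and how much weight any given engine puts on this markup is an assumption of the sketch.

```python
import json
from typing import Any, Dict


def product_jsonld(name: str, description: str, audience_type: str,
                   category: str = "BusinessApplication") -> Dict[str, Any]:
    """Build a schema.org SoftwareApplication object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "SoftwareApplication",
        "name": name,
        "applicationCategory": category,
        "description": description,
        "audience": {"@type": "Audience", "audienceType": audience_type},
    }


def to_script_tag(data: Dict[str, Any]) -> str:
    """Wrap the JSON-LD in the script tag used to embed it in a page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values echoing the example in the list above.
markup = product_jsonld(
    name="Acme",
    description="Revenue intelligence software for B2B sales teams over 100 reps",
    audience_type="B2B sales teams over 100 reps",
)
tag = to_script_tag(markup)
```

The point of the sketch is the contrast in the `description` and `audienceType` fields: a generic "software platform" would fit the same structure but carry none of the category or segment detail.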

Three of these you can improve yourself. Two require a scan to know where you stand, because schema quality and citation density aren't things most marketing teams audit directly.
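Brand name consistency is one of the signals a team can audit on its own. A minimal sketch, assuming you have already collected the name form used on each surface by hand (the surface labels and the `audit_names` helper are hypothetical):

```python
from collections import Counter
from typing import Dict, List, Tuple

# Name form found on each surface (made-up audit data).
surfaces: Dict[str, str] = {
    "website": "Acme",
    "press release": "Acme, Inc.",
    "podcast bio": "Acme Software",
    "executive bio": "Acme",
}


def audit_names(found: Dict[str, str]) -> Tuple[str, List[str]]:
    """Return the most common name form and the surfaces that deviate from it."""
    counts = Counter(found.values())
    canonical = counts.most_common(1)[0][0]
    off_brand = [surface for surface, name in found.items() if name != canonical]
    return canonical, off_brand


canonical, off_brand = audit_names(surfaces)
```

Here `canonical` is "Acme", and `off_brand` lists the press release and the podcast bio as the surfaces to bring in line. Treating the most common form as canonical is a simplification; the form you standardize on is a branding decision.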

How to check your own AI Competitive Score

The fastest path is a free scan at aisubtext.ai. Takes 60 seconds. You'll get your per-engine score, how you rank in your category, and a prioritized Fix List identifying which of the five signals are weakest for your brand.

We built it as a free starting point because the first conversation with a CMO who hasn't encountered this category before needs to happen in their own data, not in a generic deck. Once you see your own score, the conversation about what to do with it becomes concrete.

What to watch, going forward

If you decide this is a metric worth tracking (and we obviously think it is), the minimum cadence that actually catches pipeline risk is monthly. Not daily. Daily fluctuations are noise. Not quarterly. By the time you notice a quarterly trend, you're two quarters into losing deals.

Monthly gives you enough signal to notice real movement without drowning you in variance.
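If readings are collected more often than monthly, averaging them per calendar month is one simple way to smooth out daily noise. The dates and scores below are invented, and the `monthly_average` helper is an assumption, not a description of how the score is actually aggregated.

```python
from collections import defaultdict
from datetime import date
from typing import Dict, List


def monthly_average(daily: Dict[date, float]) -> Dict[str, float]:
    """Group daily readings by calendar month and average each group."""
    buckets: Dict[str, List[float]] = defaultdict(list)
    for day, score in daily.items():
        buckets[day.strftime("%Y-%m")].append(score)
    return {
        month: round(sum(values) / len(values), 1)
        for month, values in sorted(buckets.items())
    }


# Made-up daily readings: noisy within a month, lower in the second month.
readings = {
    date(2025, 1, 6): 50,
    date(2025, 1, 20): 54,
    date(2025, 2, 3): 46,
    date(2025, 2, 17): 44,
}
by_month = monthly_average(readings)
```

In this example the day-to-day swings of a few points collapse into two monthly values, 52.0 and 45.0, which makes the downward move easier to see.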

Pair it with whatever competitive intel you're already running and the pattern becomes readable: when the score moves, who's eating your lunch, and what specifically they're doing differently in the five signals. If you want category-wide context, the AI Brand Index tracks how every major B2B brand ranks across the same engines.

Most B2B marketing organizations are still 12 months away from tracking this. That gap is the window the ones who move now get to work in.